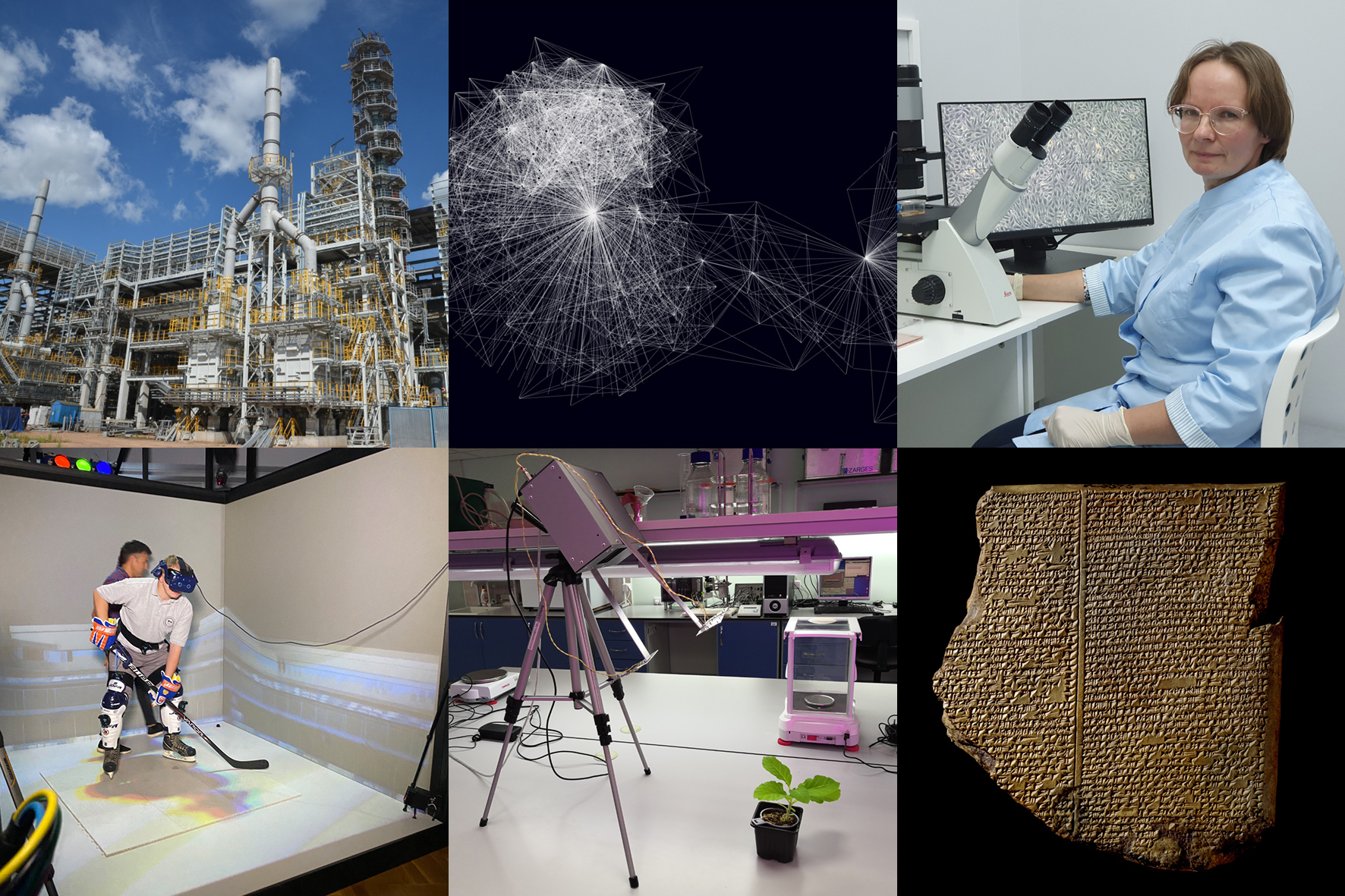

You are an entrepreneur and—all of a sudden—your company receives a barrage of negative reviews on the Internet. Who is leaving this negative feedback: bots or real people? Scientists have developed a ground-breaking new method for identifying groups of malicious bots on social media.

Bots are special computer programs that play a crucial role in social media, distributing advertisements and powering support chats. A single bot can automatically replace an entire team of specialists, and bots are often used to perform monotonous and repetitive work at the fastest speed possible. Some types of bots are also used for unethical activities, such as manipulating ratings, writing fake reviews and spreading misinformation. They are hard to spot, as many bots are highly successful at mimicking human behaviour.

Research on bots is usually based on the texts they have generated, yet this method provides little information about bot activity. When scammers start forging documents and making up numbers, they violate the laws of statistics. The same happens when someone creates bots on social media. These statistical anomalies are what led the experts at the St. Petersburg Federal Research Center to develop a method for detecting bots on various social networks, including Russia’s largest, VK.

Researchers are also analysing the networks that result from bot interactions. For example, if you create a social networking account, you first make friends with your classmates, course mates and colleagues, and they make friends with one another, forming information clusters. Bots also create clusters, but they are not natural and are easily identifiable with the new tool, which has a recognition accuracy of up to 90 per cent.
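The idea that friends of a real person tend to be friends with one another can be illustrated with a standard graph measure, the local clustering coefficient. The sketch below is not the researchers' method, which the article does not describe; the function name, the account names and the toy graph are all hypothetical and serve only to show how the "natural cluster" intuition can be put into numbers.

```python
from itertools import combinations

def local_clustering(graph, node):
    """Share of a node's friend pairs that are friends with each other (0.0 to 1.0)."""
    friends = graph[node]
    k = len(friends)
    if k < 2:
        return 0.0  # fewer than two friends: there are no pairs to compare
    linked_pairs = sum(
        1 for a, b in combinations(sorted(friends), 2) if b in graph[a]
    )
    return 2.0 * linked_pairs / (k * (k - 1))

# Hypothetical toy data: an undirected friendship graph stored as a dict of sets.
friendships = {
    "ana": {"ben", "cat", "dan"},
    "ben": {"ana", "cat"},
    "cat": {"ana", "ben", "dan"},
    "dan": {"ana", "cat"},
    "x01": {"u1", "u2", "u3"},
    "u1": {"x01"},
    "u2": {"x01"},
    "u3": {"x01"},
}

organic_score = local_clustering(friendships, "ana")   # two of three friend pairs are linked
isolated_score = local_clustering(friendships, "x01")  # none of its friends know each other
```

A single number like this would not be enough to label an account as a bot; it only shows one of the structural signals that a network-based approach might combine with others.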

The method also helps analyse the contents of the communication campaign: the number of bots, their cost and the sequence of events, thus allowing the entrepreneur to assess the reputational damage and take effective action in response.

Picture: Visualization of a network of friends’ relationships. Image courtesy of the study authors.

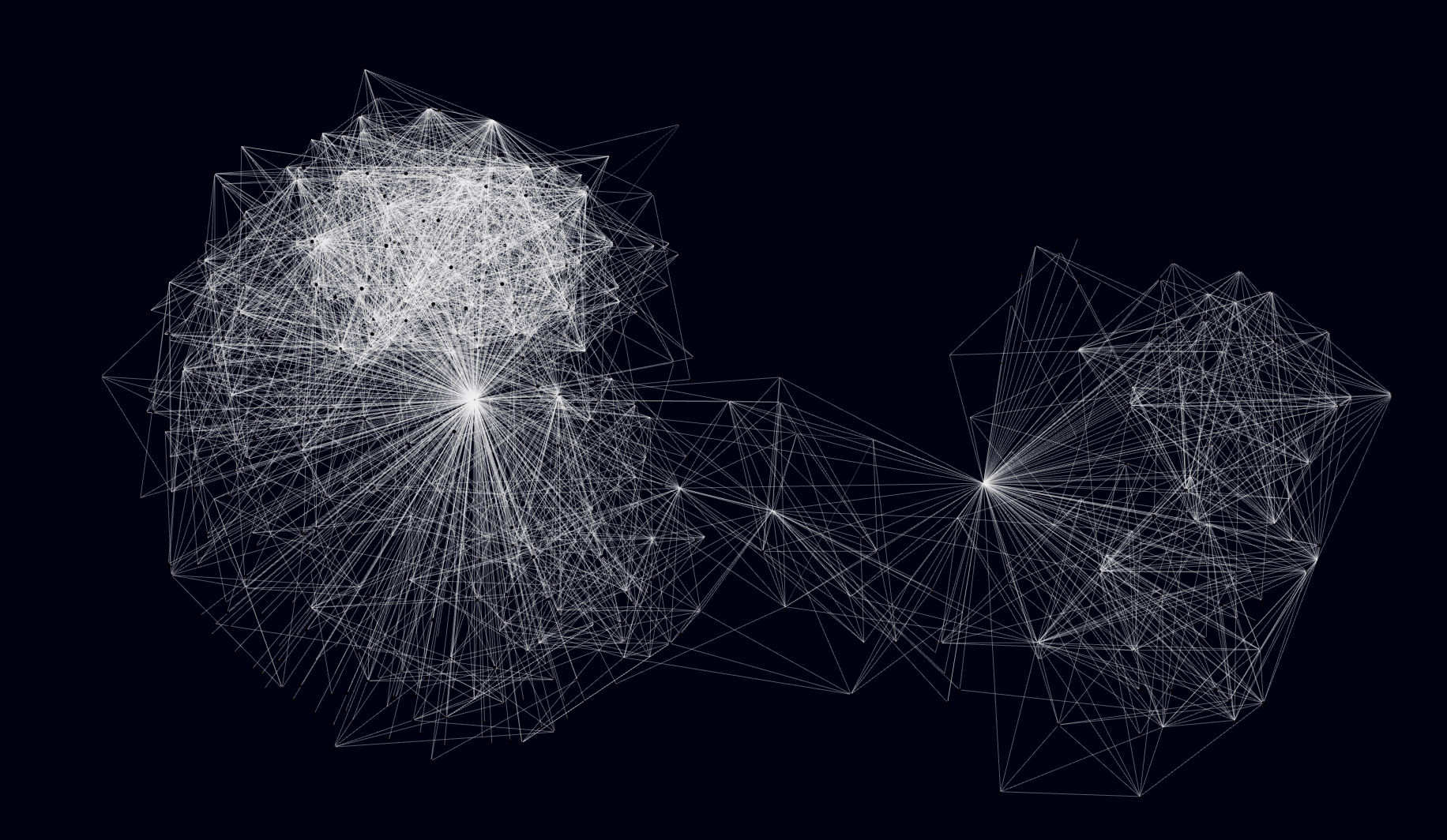

Imagine a smartphone that contains an unusual sensor, a spectrometer, that can analyse the air a person exhales and alert the wearer to possible illnesses at an early stage. A miniature and cheap basis for such a sensor—an optical comb source—has been developed by Russian scientists in collaboration with their colleagues from Switzerland.

An ordinary laser emits a single wavelength, whereas an optical comb resembles the emission of many lasers at once, whose wavelengths are rigidly linked together and form a “frequency pattern” (a line spectrum). The comb works like a ruler and can be used to measure many things. For example, it can be used in ultra-precise clocks, navigation systems and other complex devices. Such signals have been used in scientific research for more than 20 years, but the laser systems previously used to create them were too cumbersome for everyday use. To solve the problem, the scientists turned to optical microresonators—rings or discs of a special transparent material ranging in size from a few millimetres to fractions of a millimetre, inside which light can glide for a very long time, reflecting off the walls at a small angle. Under certain conditions, the light inside such a resonator turns into a set of very short pulses that produce stable comb-like spectra.

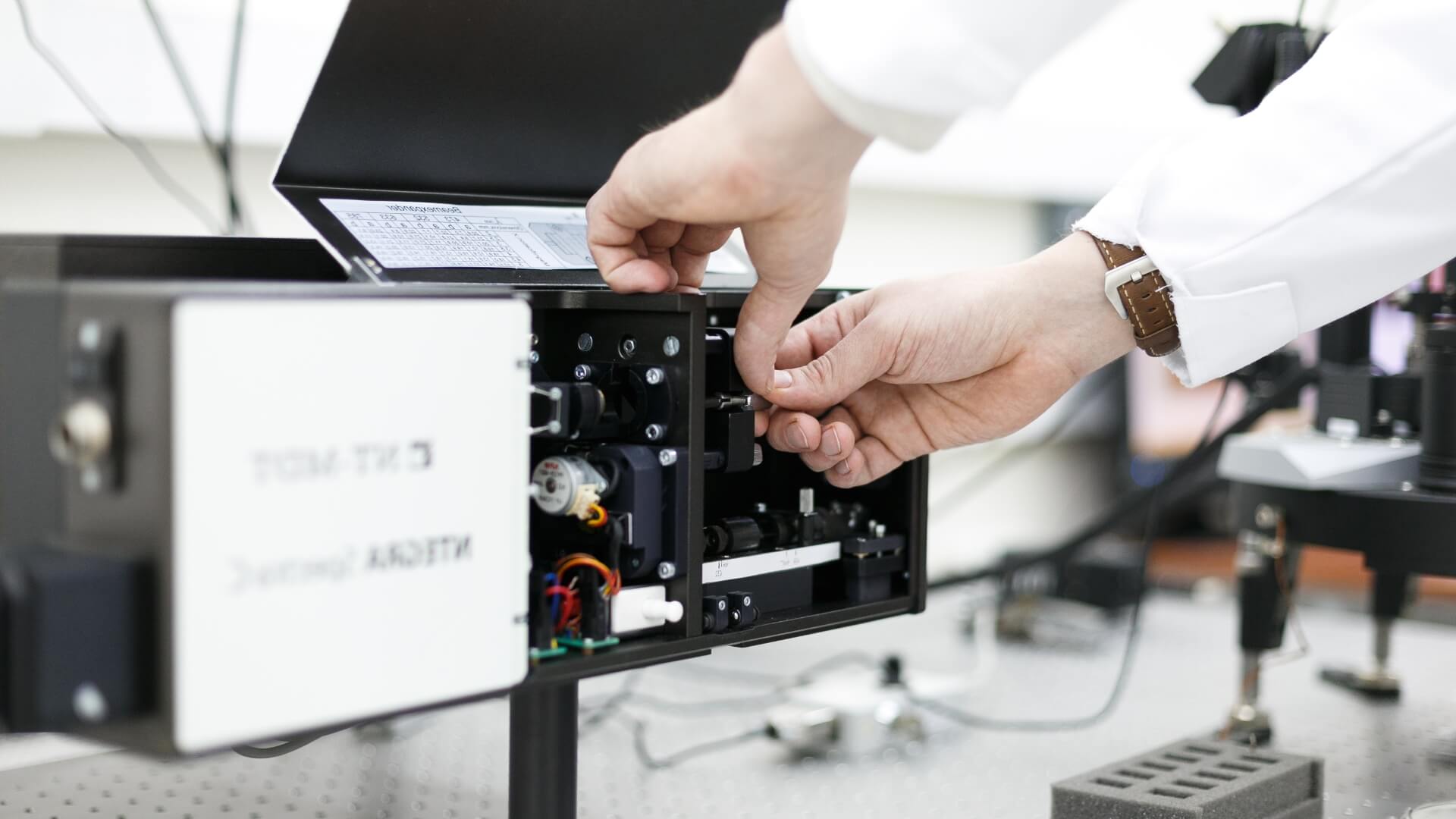

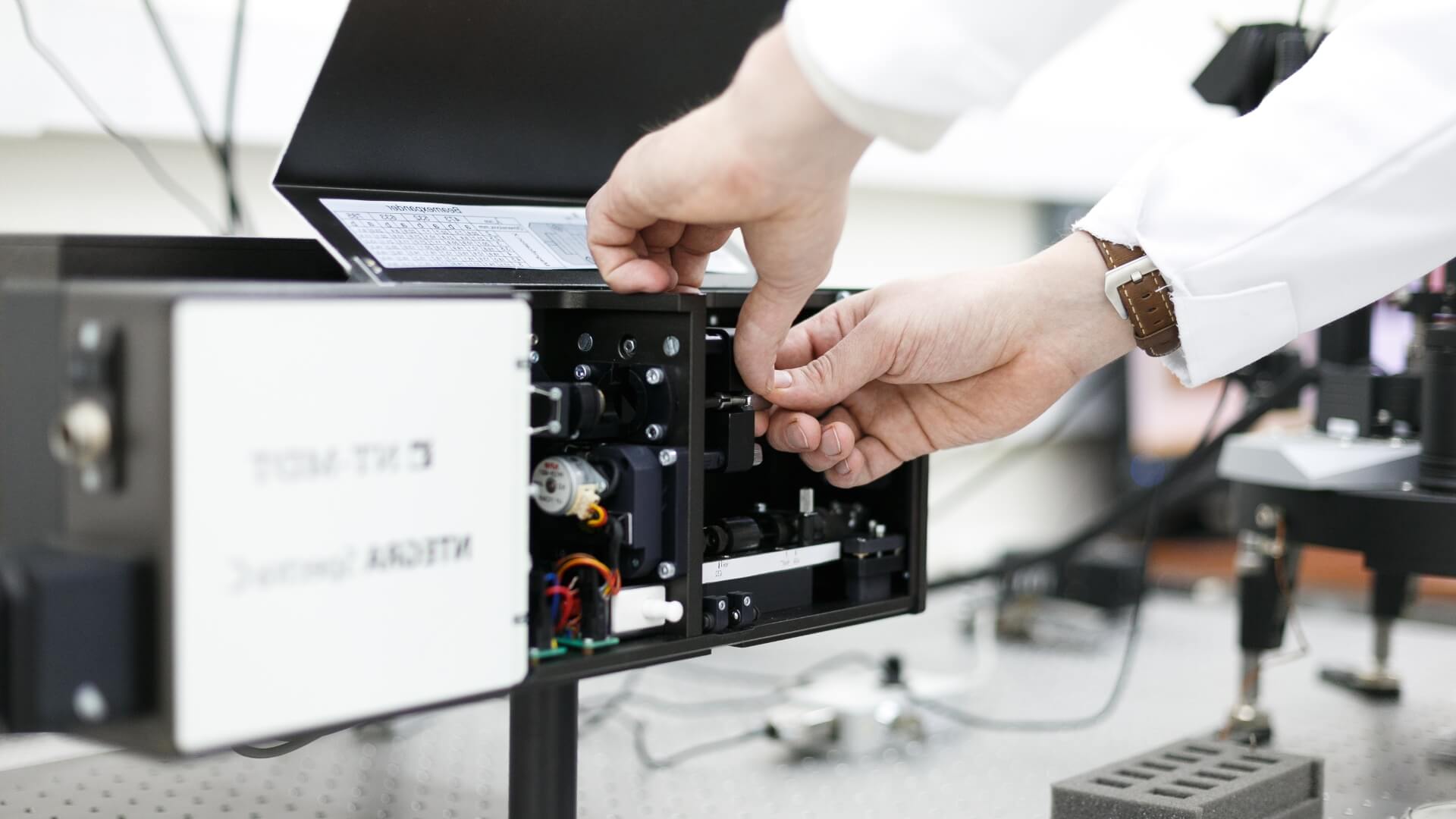

Recently, researchers from the Russian Quantum Center, together with foreign colleagues, managed to combine a semiconductor laser (almost the same as in laser pointers) and a unique optical microresonator manufactured using integrated technology, thus creating an optical comb source. It is extremely stable and tiny and can be powered by a simple battery. In addition to providing the basis for many useful sensors, including those for mobile devices, it can replace lasers in high-speed data transmission.

The scientists are now optimizing the new optical comb source in collaboration with Samsung in Russia and plan to bring the new product to market soon.

The optical comb source. Source: Russian Quantum Center.

In order to empirically demonstrate the theoretical possibility of effective resonant energy transfer through excitonic transitions in systems with dipole–dipole energy transfer, a collaborative team of experts that included scientists from the National Research Nuclear University MEPhI, Moscow Institute of Physics and Technology, Sechenov University, Shemyakin and Ovchinnikov Institute of Bioorganic Chemistry, the University of Southampton (UK), the University of Reims Champagne-Ardenne (France), Donostia International Physics Center (Spain) and the Basque Foundation for Science (Spain) was needed. The project was supported, among other benefactors, by the Russian Science Foundation.

The interaction of light and matter at the quantum level has remained an important area of research at the intersection of chemistry and physics since the 1950s. Excitons—an auxiliary object of quantum theory (quasiparticles) whose behaviour describes the bound state of a pair of carriers of opposite charges, an electron and a hole—were theoretically described by the Soviet physicist Jakob Frenkel back in 1931; the experimental proof of the theory describing excitons came 20 years later. The concept of excitons is now actively used to study effects in organic semiconductors, including Förster resonance energy transfer (FRET), the lossless energy transfer between two closely spaced organic molecules under the influence of resonance. The theory predicts the so-called “carnival effect”—control over the direction of FRET energy transfer between excitons of two molecules, up to and including the possibility of a dramatic change in that direction. The study confirms the possibility of such control through the “strong coupling effect,” which is the formation of a hybrid energy state between excitation in matter and localized electromagnetic excitation.

Above all, the results of the collaboration pave the way for increasing the efficiency of photovoltaic devices that convert light energy into electricity, as some of the excitonic states in organic semiconductors are channels of energy loss. That is, the direct result of the discovery could be a manyfold increase in the efficiency of certain types of solar panels, as well as of organic LEDs, meaning that today’s generation of OLED displays could quickly become a thing of the past. But it seems that we are talking not just about new flexible displays and solar panels, as the potential of the discovery extends much further and includes the ability to precisely control chemical reactions remotely, as well as to develop optically controlled imaging technologies in medical diagnostics.

Image courtesy of the authors’ research.

Today’s oil refiners and petrochemists are faced with the challenge of transforming oil as fully as possible into usable products—motor fuels and petrochemical feedstocks that can be used to manufacture fabrics, plastics and much more. First of all, it is necessary to develop new technologies for processing heavy oil residues, in particular tar, which Russian scientists have managed to do. To test the technology, Tatneft created a pilot plant in 2021 that cost the company 10 billion roubles.

The output of the refining process is gasoline, jet kerosene and diesel fuels. After refining almost any oil, and especially the heavy crude that now dominates the market, we are left with tar—a residue with practically no applications. It is usually processed into coke in factories, which is then combusted to produce large amounts of carbon dioxide. It is hard to turn the whole amount of tar into other useful products using conventional methods as the conditions for conversion are too strict, and the efficiency of the processes involved is too low. For several years, researchers at the Topchiev Institute of Petrochemical Synthesis of the Russian Academy of Sciences have been working on this problem, and they have come up with fundamentally new catalysts (accelerators), as well as a process that ensures record refining depth of tar and heavy oils (over 93 per cent) into fuel and feedstock for petrochemicals.

The researchers solved several problems at once. First, they created catalysts whose particles were reduced to the size of heavy feedstock molecules so that the molecules do not poison the catalysts and do not interfere with their operation. This allows for very small quantities of the catalyst to be used (0.05 per cent by mass) at low pressures (up to 100 atm). Second, they have worked out how to create catalysts from the simplest ingredients directly in the feedstock before or even during the reaction, which is the dream of every chemist.

Scientists have even shown that this method is also suitable for recycling plastic waste.

To test the technology under real conditions, Tatneft has built and commissioned a pilot plant for deep processing of tar and bituminous oil with a capacity of 50,000 tonnes per year. The results of the pilot runs will make it possible to start building a plant with a higher capacity, from a million tonnes of tar per year, which could subsequently be replicated in Russia, India, China, the Middle East and other oil producing countries.

The development and introduction of this new technology at Russian refineries will make it possible to achieve an oil refining depth of up to 95 per cent and eliminate the conversion of tar into coke. This will reduce carbon dioxide emissions and support companies on the path to decarbonization.

A pilot tar processing plant. Image courtesy of the authors of the study.

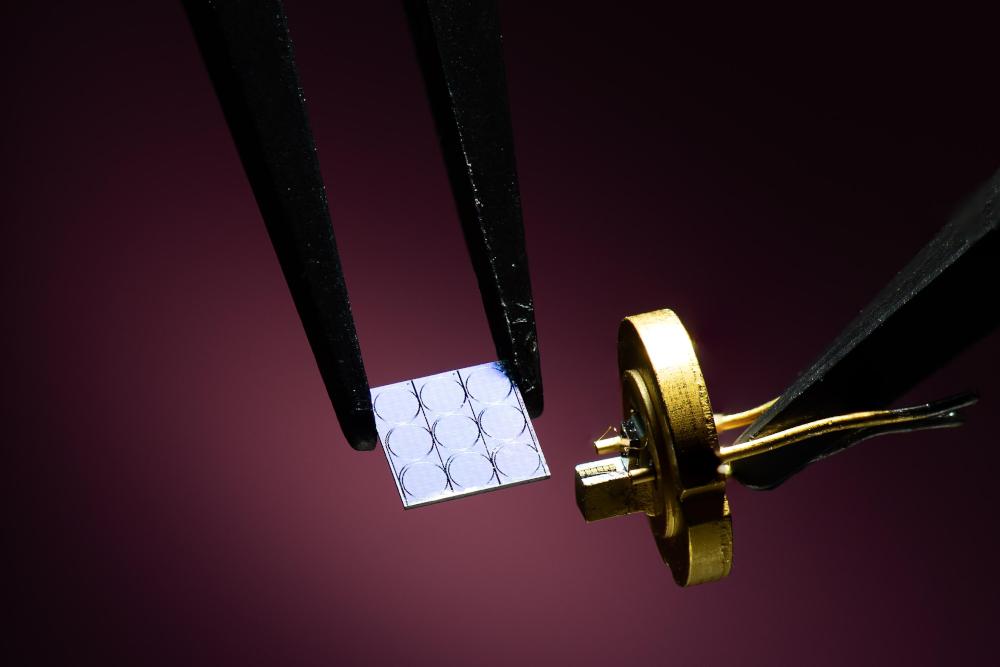

There are some diseases that cannot be treated without operations. One of them is aortic valve calcification, which affects around one out of every hundred people. Russian and American scientists have figured out how to use machine learning algorithms to find a potential cure, which they duly found, and then proved its effectiveness on animals.

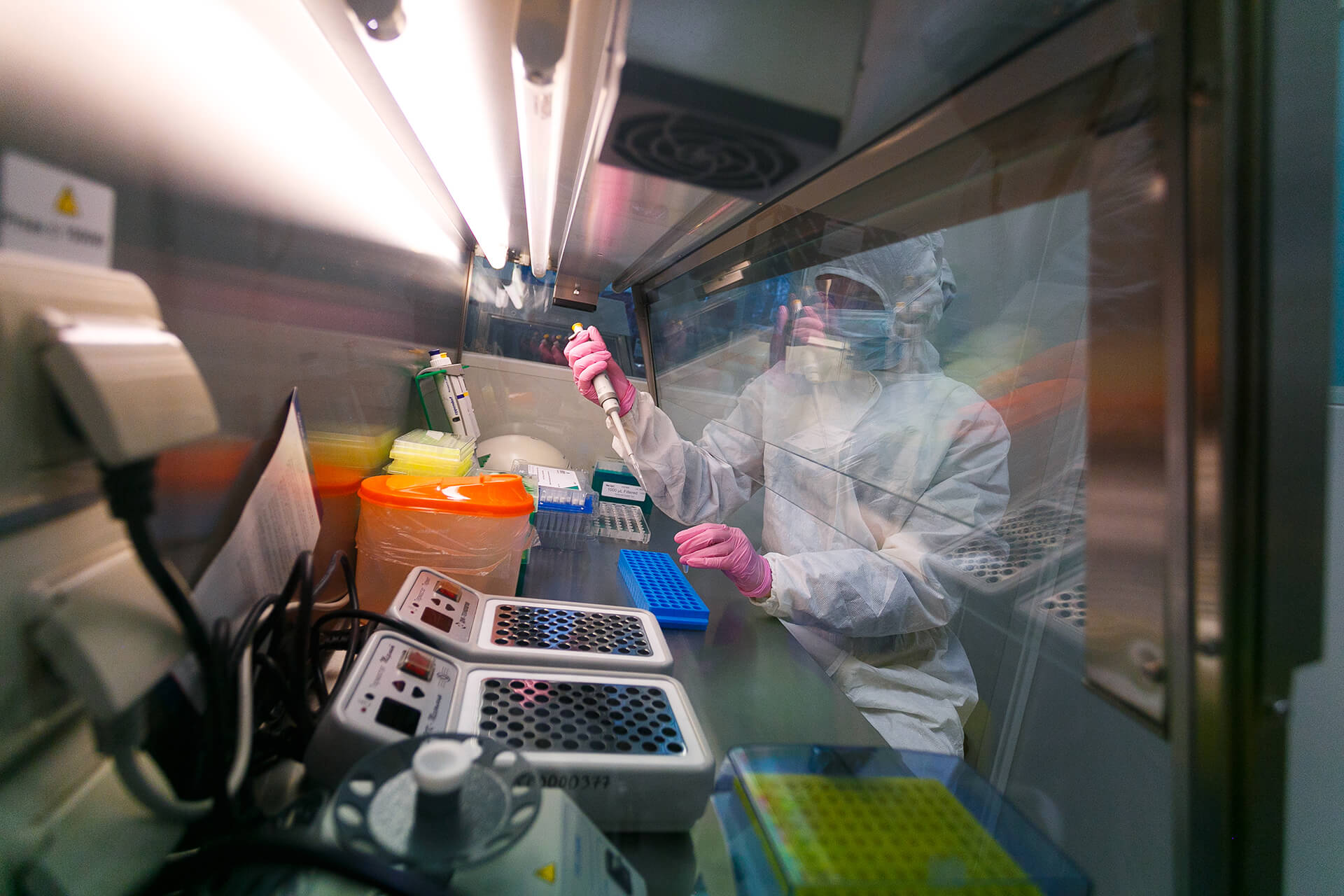

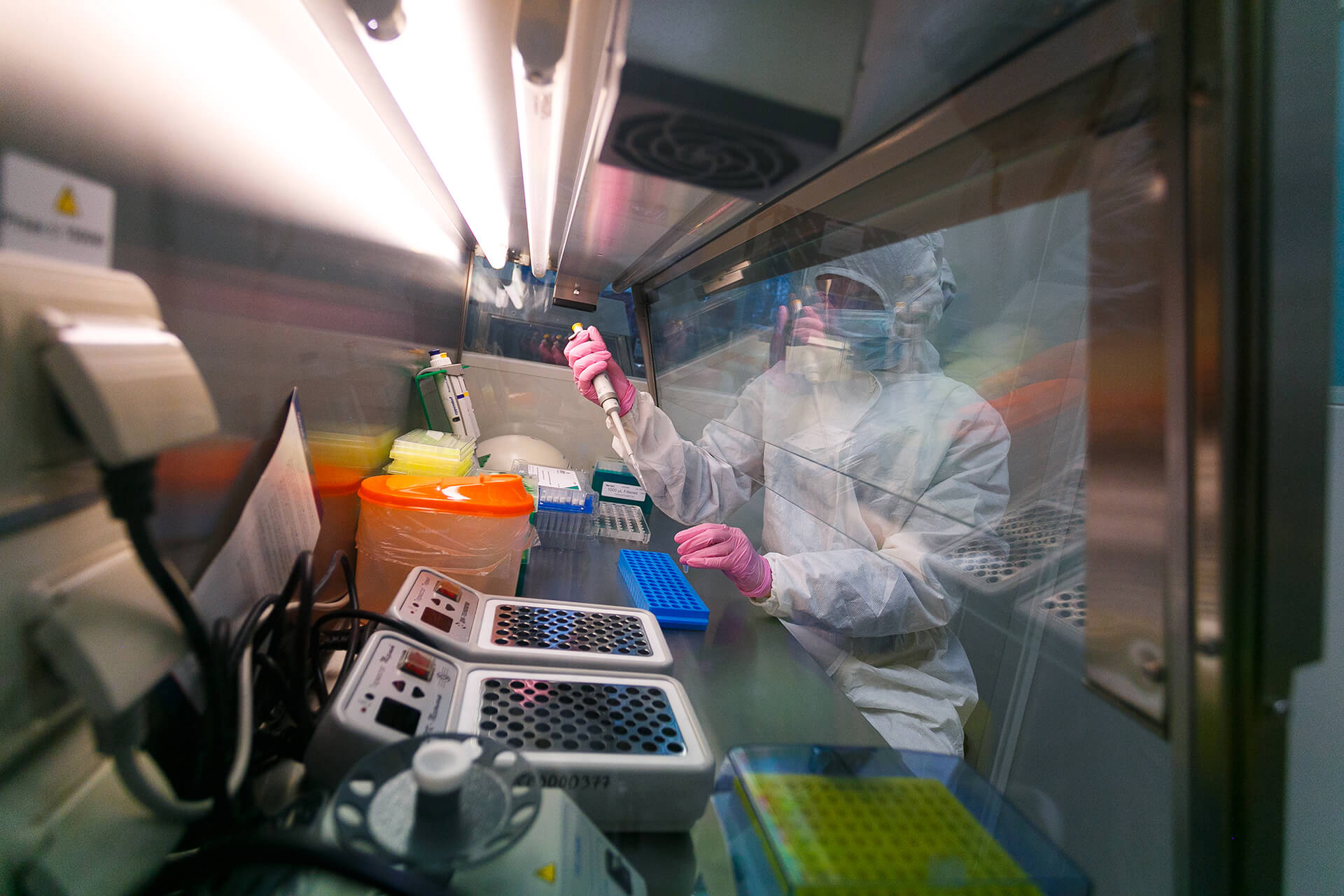

When calcium metabolism is disrupted in the body, certain tissues become calcified, i.e. oversaturated with calcium. Researchers from the California Institute for Regenerative Medicine, the Institute of Cytology of the Russian Academy of Sciences and the Almazov National Medical Research Centre have discovered a mutation that is associated with aortic valve calcification. They assembled a database of 1,500 compounds that could potentially cure the disease. The future compound would affect the genetic network of a sick person and change it to normal. The scientists figured out how to use machine learning algorithms to search for the right compounds, found a number of such substances and tested their effectiveness on a complex cell model made of stem cells simulating the disease.

The experts then tested the chosen substances on the cells of the patients in treatment. These are quite complex cell cultures, which only a few laboratories in the world have. The scientists found a suitable compound—XCT790—and carried out pre-clinical tests on mice. The compound was shown to be effective in treating aortic valve calcification and caused no significant side effects.

Adapting this approach for other complex diseases would help develop new medicines more quickly.

Anna Malashicheva, project leader and one of the authors of the study, tests the compound on the cells of patients with aortic valve calcification. Photo from personal archive.

Surprisingly, even at the beginning of the 21st century we had practically no idea how our bodies detect heat or cold. While the cells and cellular structures that act as skin thermoreceptors have been known to histologists since the 19th century, the physical principles of their operation were the subject of the 2021 Nobel Prize in Physiology or Medicine, awarded to David Julius and Ardem Patapoutian for identifying receptors that allow the body’s cells to sense temperature and mechanical stimuli, and for deciphering the biochemical principles of their functioning. In fact, the mystery of the mechanisms responsible for thermoreception, which is also linked to the sense of touch and, to a certain extent, pain, has preoccupied scientists at all levels for decades. In particular, many physiological responses are based on sensing temperature by triggering a specific group of ion channels in the transient receptor potential (TRP) family. The functions of these channels have been studied extensively, but it is only now that ways of analysing them are beginning to yield practical results.

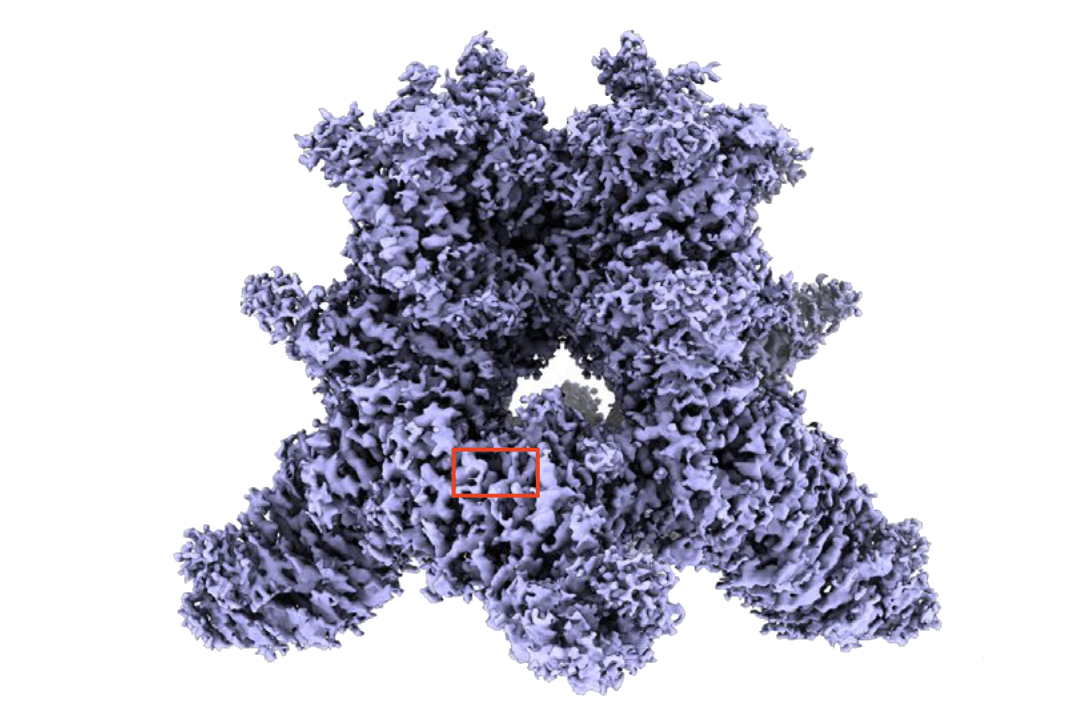

An international team made up of researchers from Columbia University (New York), the University of Illinois (Peoria), and the Institute of Physiology of the Czech Academy of Sciences (Prague), and Russian structural biologists from the Shemyakin and Ovchinnikov Institute of Bioorganic Chemistry of the Russian Academy of Sciences, the National Research Nuclear University MEPhI, HSE University and the Moscow Institute of Physics and Technology (MIPT), has determined the structure of a thermoreceptor (TRPV3) in closed, transiently activated and (for the first time in the world) open states and identified the structural aspects of its activation at the molecular level. The mechanism proved rather complex: an increase in temperature induces structural changes in the channel itself which are then transferred to the channel molecules bound to the cell membrane lipids.

What, in and of itself, will the study of thermoreceptors yield? In theory, there is a real opportunity to create, on a rational basis, a fundamentally new type of antipyretic and analgesic. But not only that: basic research of this kind solves the problem of a lack of understanding of detailed physiological mechanisms. This paves the way for future complex technologies, the nature of which we do not yet fully understand, but which we know for certain have never been left without development in the history of humankind.

Structure of a TRPV3 thermoreceptor. Source: Kirill D. Nadezhdin et al. Nature Structural & Molecular Biology, 2021.

Biosensors are the cutting edge of interdisciplinary science at the interface between biochemistry and physics. The essence of even the basic technologies currently used in biosensors is rather difficult to explain. A brief description of a research project supported by a grant from the Russian Science Foundation sounds rather scary: it is about developing SERS aptasensors based on the effect of plasmonic amplification in Raman spectroscopy for the quantitative detection of viral particles.

In practice, as with everything in modern science, we are talking about things fundamentally known decades ago. Raman spectroscopy is a method of studying chemical reactions remotely. Chandrasekhara Raman received the Nobel Prize in 1930 for discovering that photons scatter non-uniformly from different media in which chemical reactions take place. The very idea that one in hundreds of millions of photons may scatter differently from the rest, depending on what happens at the surface (more precisely, on how the molecules vibrate at the point where the photon hits), is very promising. The Raman effect itself is very weak, yet for chemists it is one of the main laboratory methods, like the similar method of Fourier transform infrared (FTIR) spectroscopy. Much has changed with the discovery of surface plasmon resonance (SPR), which amplifies the Raman effect many times over, and with SPR sensors, which have been actively used in industry since the 1990s. Meanwhile, the technology has moved on from the macrocosm to the microcosm: the SERS effect proved to be quite applicable in microparticle research. SERS aptasensors with DNA aptamers are capable of “signalling” the presence in the environment of particles of a particular kind that are the size of a viral capsid.

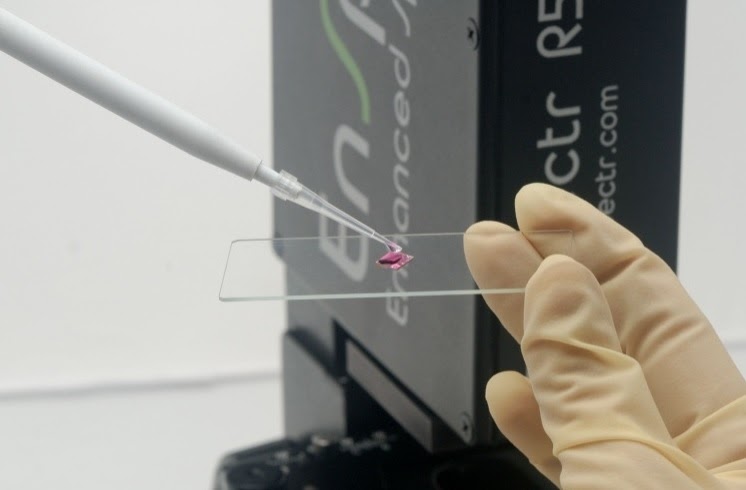

What can detect the presence of viruses in the environment will naturally be used these days primarily for virus detection: the subject of development in this case is SERS aptasensors for quantitative diagnosis of coronavirus particles in the sample, including SARS-CoV-2 coronaviruses. Moreover, the conditions for such technology are ease of use, the compactness of the diagnostic system (Raman unit) and the ease of sample preparation. Work on the aptasensors was carried out by experts from the RAS Institute of Solid State Physics, Lomonosov Moscow State University, Sechenov University, Gamaleya National Center, RAS Institute of Physiologically Active Substances and RAS Chumakov Federal Scientific Center. The newly developed technology meets these conditions, in particular, with a reaction time of 5 minutes and uniquely high detection selectivity. But what also follows from this is that the idea can be modified and scaled almost indefinitely: it is certainly a potential component of those fantastic “nanotechnology worlds” that futurologists dreamed a lot about in the 2000s, without much justification at the time. Biosensors for rapid quantification of a complex protein compound of a known structure are a technology whose prospects are almost impossible to assess. From such fundamental “building blocks” of knowledge, entire industries that we cannot imagine now could be built in the decades to come.

Material is being prepared for a SERS Raman spectroscopy and dynamic light scattering study. Image courtesy of the study’s authors.

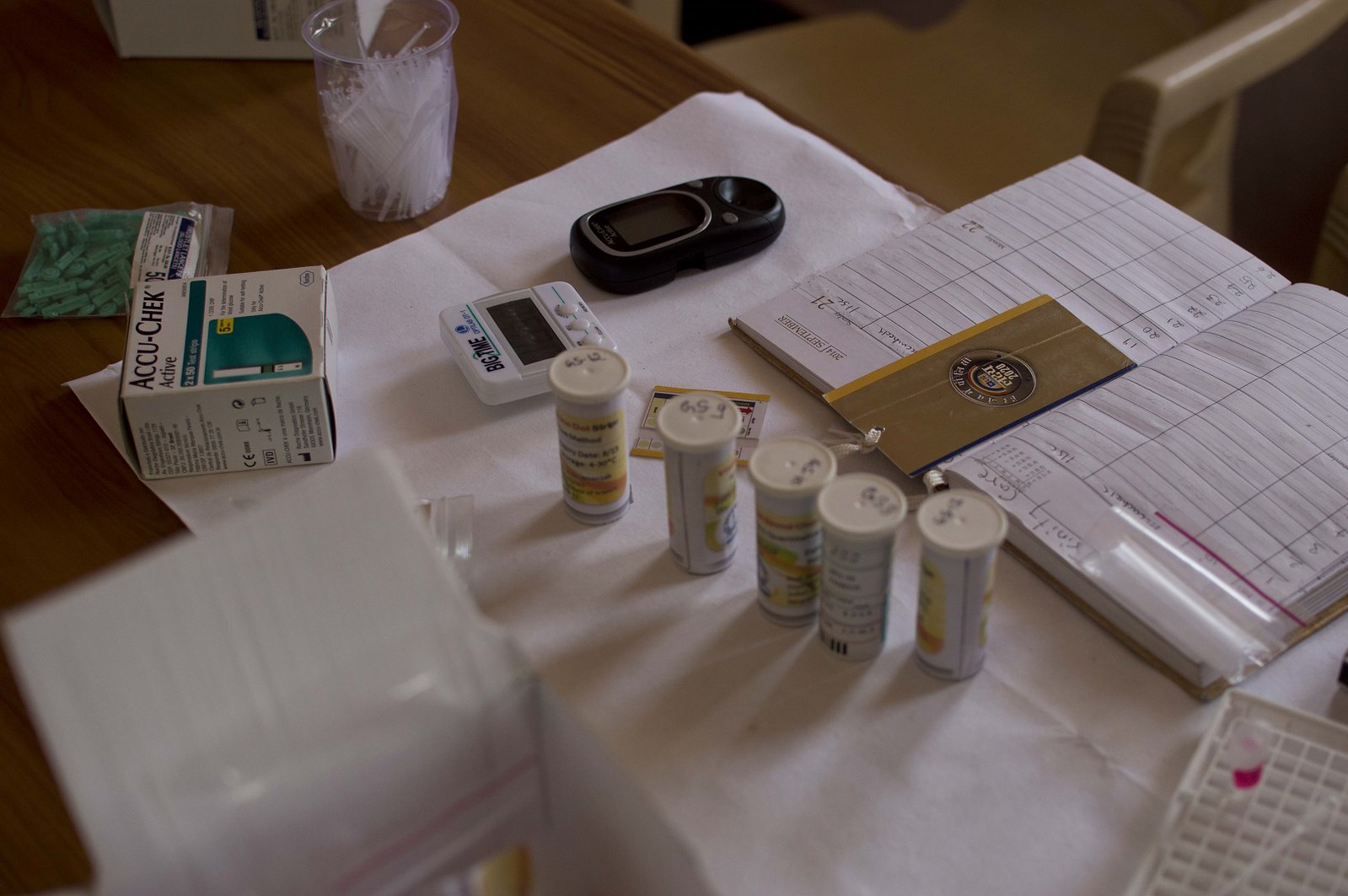

There are more than five million people with diabetes in Russia, of whom around 270,000 suffer from type 1 diabetes, the most severe form of the disease, which occurs in childhood, adolescence and young adulthood. Type 1 diabetes develops because of a lack of insulin, the deliverer of glucose (sugars) to the body’s cells. This is why people with the condition need daily injections of insulin. An inaccurate choice of insulin dose can lead to the development of hypoglycaemia, a difficult-to-predict and potentially dangerous complication. Medical professionals, together with mathematicians, have trained a computer to predict this kind of event. In the future, the software could be used as an app on a smartphone.

The most common and dangerous complication of type 1 diabetes is hypoglycaemia, i.e. a drop in blood sugar below normal levels. Such a drop can be particularly dangerous at night since a sleeping person does not always feel the symptoms of a drop in blood glucose levels and does not take action in time.

To develop a programme for predicting nocturnal hypoglycaemia, researchers from the Research Institute of Clinical and Experimental Lymphology – Branch of Institute of Cytology and Genetics, Siberian Branch of Russian Academy of Sciences continuously measured glucose levels in 400 patients with type 1 diabetes and collected detailed clinical information about them—gender, age, insulin dose, diabetes complications, past glucose levels and biochemical indicators. A computer algorithm developed by researchers from the Sobolev Institute of Mathematics of the Siberian Branch of the Russian Academy of Sciences analyses this data for a particular patient and can predict the behaviour of glucose in their blood within the next 30 minutes. The prediction accuracy is more than 90 per cent. However, the scientists plan to improve it using the neural network method.

The programme can be useful for people who do not have a special insulin delivery system, that is, a pump with a built-in algorithm for predicting glucose levels. Today, they form the majority of type 1 diabetes patients in Russia. In the future, the technology could become a smartphone app. The purpose of such an app would be to detect whether the blood sugar situation is becoming dangerous and give a signal to the patient, allowing them to take measures to prevent hypoglycaemia.

Source: Trinity Care Foundation.

Even experienced crop scientists are not always able to detect in good time the events that lead to disease and plant death. Today, this job is increasingly being done by remote monitoring devices, the development of which is a key focus in agriculture. Yet each system has its own limitations, which Russian researchers are trying to overcome. A stationary version of a prototype of such a device is ready, with a more advanced mobile system set to appear in the near future.

A team of scientists from Lobachevsky State University (Nizhny Novgorod), in collaboration with colleagues from the Institute of Applied Physics of the Russian Academy of Sciences, has developed a device that can detect plant problems at an early stage. The system will guess the “wants” of the plant at the first signs, imperceptible to the human eye, telling you when the soil is lacking moisture, what to fertilize the soil with, and which factors are likely to have a negative impact on the plant in the near future.

When the plant faces a stressful situation—drought, high temperatures, strong winds and other events—it begins to reflect less light in the green part of the spectrum. The device illuminates the plant with short bursts of yellow-green light that do not harm it, and measures the reflected light, which allows its physiological condition to be assessed. The stationary prototype device has two cameras with filters for transmitting light at two wavelengths: 531 nanometres (green) and 570 nanometres (yellow). On receiving the reflected signal from the plant, each of the device’s cameras produces two images: under normal light and with an additional flash of yellow-green light. The computer automatically calculates the difference between the second and first images for each of the cameras—for the green and yellow spectral bands. Based on these differences in each pixel, a special index, the photochemical reflectance index (PRI), is calculated. It is the value and spatial distribution of the index that are the main sources of information on the stress effects on the plant. The flash of the device allows for more accurate measurements of the index and the identification of its different components, making the method more reliable and informative.
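The per-pixel calculation described above can be sketched in a few lines. The article does not give the formula the device uses, so this sketch assumes the standard definition of the index, PRI = (R531 − R570) / (R531 + R570), applied to the flash-minus-ambient differences. The function names and the tiny one-row "images" are hypothetical.

```python
def flash_difference(with_flash, ambient):
    """Per-pixel difference between the flash-lit frame and the ambient-light frame."""
    return [
        [f - a for f, a in zip(row_f, row_a)]
        for row_f, row_a in zip(with_flash, ambient)
    ]

def pri_map(green_531, yellow_570):
    """Per-pixel PRI = (R531 - R570) / (R531 + R570); None where the sum is not positive."""
    result = []
    for row_g, row_y in zip(green_531, yellow_570):
        out_row = []
        for g, y in zip(row_g, row_y):
            total = g + y
            out_row.append((g - y) / total if total > 0 else None)
        result.append(out_row)
    return result

# Hypothetical 1x2 frames from the two cameras (arbitrary intensity units).
green_ambient, green_flash = [[10.0, 20.0]], [[40.0, 50.0]]
yellow_ambient, yellow_flash = [[5.0, 10.0]], [[25.0, 70.0]]

green_diff = flash_difference(green_flash, green_ambient)     # [[30.0, 30.0]]
yellow_diff = flash_difference(yellow_flash, yellow_ambient)  # [[20.0, 60.0]]
pri = pri_map(green_diff, yellow_diff)
```

Subtracting the ambient frame first means the index reflects only the light supplied by the flash, which is one plausible reading of why the flash makes the measurement more reliable.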

This means that once a person is aware of any problems in the plant, he or she can take appropriate action in time to save the crop. A stationary version of the prototype device for use in greenhouses is already in place, and a mobile solution with broader functionality will be available in the near future. There are plans to introduce the project to agro-industries and agricultural enterprises.

Image courtesy of the study authors.

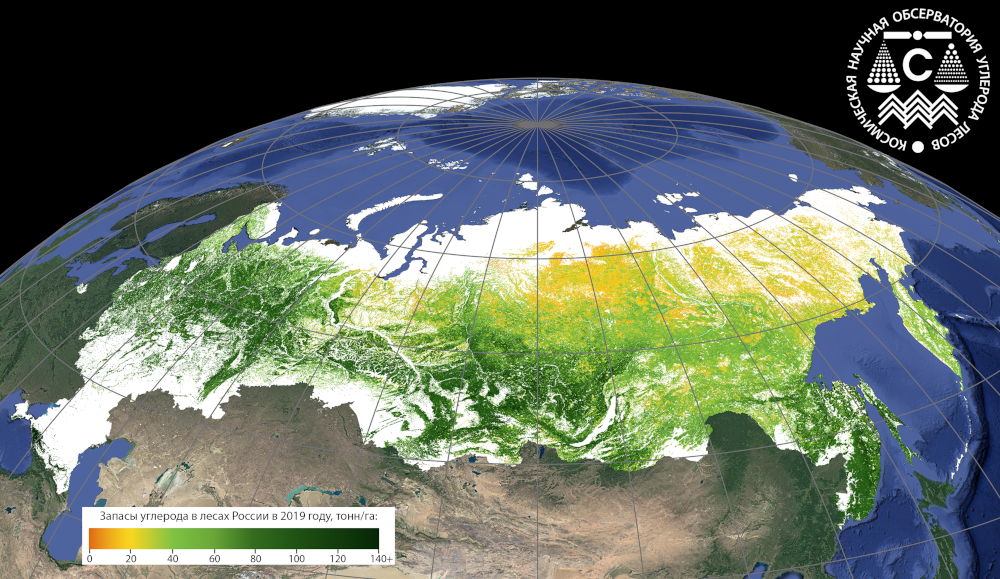

The climate is changing, challenging humankind to change, too, in order to slow down the Earth’s destructive processes. But in order to meaningfully reduce greenhouse gas emissions, the absorption capacity of our forests needs to be properly accounted for. How much carbon is stored in the forests now, and at what rate do they absorb it? For the first time in Russia, the issue has been studied on a nationwide scale using satellite systems, and it has been found that forests can absorb more carbon than previously thought. The algorithms and data processing methods developed will enable the government and the industrial sector to meet the challenges of reducing their carbon footprints.

In response to climate change, a number of countries, including Russia, are implementing policies to cut greenhouse gas emissions into the atmosphere. Forests absorb carbon, but in order to calculate their contribution, we need to know their absorption capacity with a high degree of accuracy and verifiability. Previously such monitoring was carried out by means of ground surveys, which did not cover the entire territory of the country and did not allow the necessary information to be collected on a regular basis. A team of researchers from the RAS Center for Forest Ecology and Productivity, the RAS Space Research Institute and Siberian Federal University has, for the first time ever, conducted large-scale carbon monitoring in all forests in Russia using satellite data and image processing algorithms they have developed. The scientists took different forest characteristics, such as their timber reserves, species composition, age and productivity indicators, into account. They looked not just at the forests that usually make it to the state registers, but also at forests on abandoned agricultural land and sparse forests in the north, as well as some other sites.

The scientists calculated carbon stocks and their dynamics, and found that both the carbon stock of the forests and the rate at which they absorb carbon are much higher than the figures currently used in official documents.

In the future, such monitoring will make it possible to address both governmental climate objectives, and those of the industrial sector. By engaging in fire protection, cultivation and conservation projects, companies can offset their carbon footprint.

Image courtesy of the authors of the research.

Every year, archaeologists find tens of thousands of different artefacts from ancient times. Yet discovering them is often far easier than reconstructing history from them. A team of Russian and foreign scientists, who ten years ago identified a previously unknown extinct subspecies of humans—the Denisovans—has found a way to examine artefacts more quickly and accurately. This, in its own revolutionary way, will help address many complex and important issues in human evolution.

Today, scientists analyse archaeological finds not only by their external appearance, but also by looking inside, at their DNA. This is found in bones and teeth, which are very hard to find, as burials were made outside the sites where the humans lived. But even these finds give little information for analysis.

Researchers from the Institute of Archaeology and Ethnography of the Siberian Branch of the Russian Academy of Sciences and the Max Planck Institute for Evolutionary Anthropology (Germany) have come up with a solution. They took DNA from cell nuclei found in the soil of caves and applied a completely new approach to processing it. They “read” the genome and compared it step by step with the information in the genetic database. The scientists separated human genes from animal genes and thereby reconstructed information about the people who inhabited the Chagyrskaya and Denisova caves in Russia and the Atapuerca Cave in Spain some 50,000 years or more ago. The data was cross-checked against DNA from bones, which corroborated the conclusions.

The DNA traces enable the study of entire populations of ancient people and the relationships between them, thus shedding light on many controversial and complex questions of human evolution.

Chagyrskaya Cave. Image courtesy of the authors of the study.

Another study also seeks to add to our understanding of the prehistoric period. Scientists from the South Ural State University are developing an equally revolutionary technology—a rather large-scale, multidisciplinary study of mobility and migration from archaeological and geochemical data using stable isotope analysis—as part of the project “Human Collective Migrations and Individual Mobility in a Multidisciplinary Analysis of Archaeological Information (Bronze Age of the South Urals)”.

The researchers in Chelyabinsk are working on an idea that has apparently crossed every archaeologist’s mind and been dismissed with the words “not in this century” as too technologically complicated. There is a large and detailed array of data on funerary rites and categories of grave inventory in the burials of the South Urals. It is also possible to map the concentrations of different strontium isotopes across the same area and to examine the ratio of these isotopes in samples from the same burials. This relationship is a potential source of information about the mobility and migrations of human collectives during the Bronze Age. If we combine the geological, “isotopic” and “archaeological” maps, we can test many of the hypotheses that experts have made regarding the giant migrations of this time in Eurasia. And here we can rely on statistical methods, which in fact only came into archaeology in the second half of the 20th century, but which have always promised crucial results.

Today, we can only guess as to what actually happened at the beginning of the 2nd millennium B.C. on the territory of what is now the Chelyabinsk Region. All we know is that what was happening was apparently an important part of future history, including ethnohistory, of the entire continent and perhaps beyond. Reliable explanatory models of major migrations in prehistory are almost non-existent; the debate about what drove them and how they were organized has occupied scientists for at least a century and a half, and there seems to be no prospect of resolving these disputes using previous methods. This and similar work can probably give us a more or less reliable picture—the research is challenging, complex and costly, but the potential outcome looks extremely important. Almost everyone knows at least something about Indo-European migrations, but we need to know more, especially now that we have the chance to explore the issue much more precisely.

Fragment of a defensive system at the time of excavation. The Stone Barn settlement (Bronze Age). Source: A. Senokosova

The real possibilities of corpus linguistics only became clear in the 21st century with the dramatic reduction in the price of computing technology and the ample opportunities to bring together texts scattered in databases in hundreds of volumes. Forty years ago, this possibility was obvious yet unrealistic, as a systematic and sufficiently complete corpus of texts from even pre-Antique writing traditions seemed to be a purely theoretical construct. Meanwhile, such corpora are badly needed not only by linguists and historians, but also, for example, by folklorists, as the migration of stories and motifs in cultures is still a subject of purely “manual” work, largely dependent on the unique capacity of the individual specialist, their expertise developed over several decades of hard work. And yet, some of the work that can be done with a marked-up corpus of textual primary sources is impossible, even for a brilliant scientist. This requires a database and relatively simple computer technology in terms of IT, but something practically impossible to carry out “in one’s head.” For example, a team of graduate students can count in a good library the number of rhymes of a strictly defined type in Russian poetry of the last quarter of the 18th century and compare them with French poetry from the same period. To do something similar with 40,000 texts, even short ones, without a corpus is no longer possible: such armies of graduate students are unimaginable today.

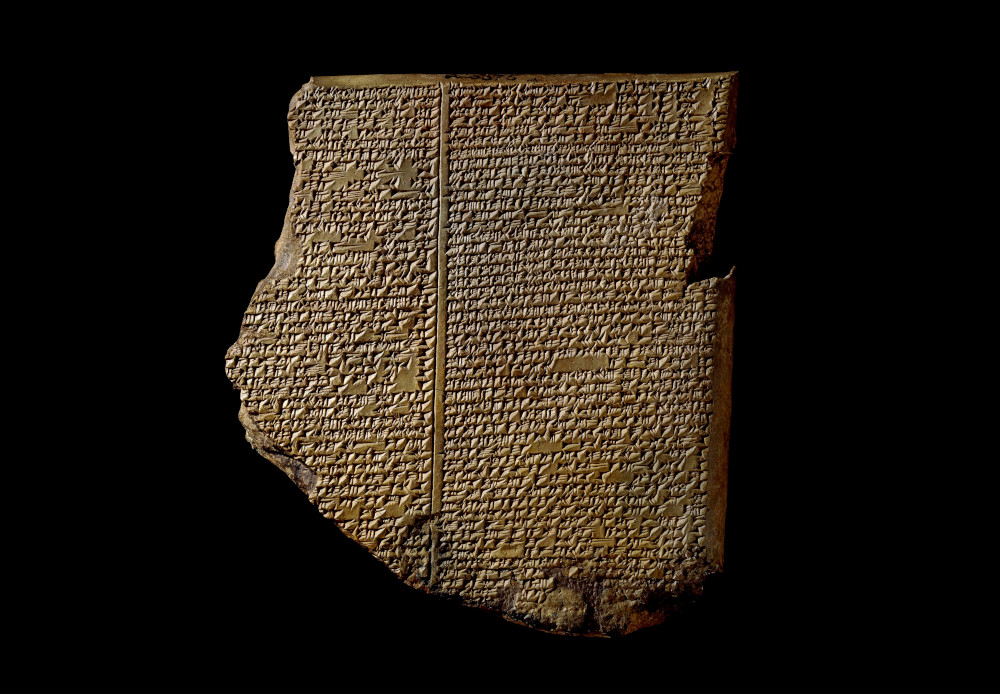

Researchers from the Peter the Great Museum of Anthropology and Ethnography (the Kunstkamera) of the Russian Academy of Sciences in St. Petersburg have examined the existing corpora of written texts from the three pre-Antique cultures of the Near East: Sumero-Akkadian, Ugaritic and Old Testament Jewish, trying to trace the evolution—parallel, intersecting, influencing neighbours and descendants—of stories, motifs and concepts. Part of the work still lies ahead. It is an extremely important attempt to explore how the migration of what at one time were the main components of myths and other sacred texts, at another time magical tales and epic songs, at a third time anecdotes and wandering plots of popular literature, might have looked in the historical process. Until recently, big data in folklore studies was primarily the encyclopaedic memory of a distinguished professor. Now it is no longer just that. There are many text corpora, and they are replenished from time to time (for example, this project also seeks to study the Eblaite cuneiform texts that were only discovered in the mid-1970s), and the unique database of folklore and mythology of the peoples of the world developed by the project participants is being constantly updated. It is for this reason that the prospects offered by comparative historical research in folklore studies and ancient oriental philology are too tempting not to pursue. We can only speculate on how this will change science: it has never been practised before, and we do not know how many fundamental assumptions will be confirmed and immutable truths disproved by this kind of research. The important thing is that it will definitely happen.

Assyrian cuneiform tablet with an extract from the Epic of Gilgamesh. Library of Ashurbanipal, Nineveh, 7th century BC.

What is the secret of a professional athlete’s success? Scientists say the answer lies in their VR system for comprehensive athlete assessment. The system complements the conventional training programme and allows amateurs and athletes in rehabilitation to complete their training faster. In the future, the researchers plan to adapt their solution to develop not only motor skills but also cognitive skills, for anyone interested.

Today, virtual reality technologies are often used to train new employees, such as train drivers, aeroplane pilots and construction workers, as well as in archaeology and other fields. A team of psychologists, mathematicians and programmers from Moscow State University is bringing VR into professional sports. The system they have created makes it possible to assess whether an athlete is making the right decision on the field of play. To do this, the researchers recorded the movements of world champions and made avatars of them. These virtual competitors show where the champions look and move, what position they stand in and how quickly they react to stimuli.

There is no equivalent system in the world that can accurately reproduce the conditions specific to each sport. The methodology has so far been adapted for ice hockey and freestyle wrestling, but the scientists intend to cover more sports and make it as accessible as possible, both to novices and to athletes undergoing rehabilitation. In the future, they hope to open the VR system to a wide range of users and focus on developing cognitive skills, such as attention.

Image courtesy of the authors of the research.

Finding a cure for a serious illness is only half the battle: it is also important to get it into the body in a way that does not affect healthy cells. One such delivery system, magnetic nanoparticles, is already starting to be used in medicine, but until recently, it was not known what happens to them after treatment. A team of Russian researchers studied the process for several years and proved the particles safe for the human body.

According to the Ministry of Health of the Russian Federation, there are currently 3.7 million people living in Russia with some form of cancer. Traditionally, cancer has been fought with broad-spectrum chemotherapeutic drugs, which affect more than just the tumour and lead to a range of serious side effects. The efficacy of treatment can be enhanced by using nanoparticles of various kinds, which are an ideal platform for delivering drugs to malignant cells. But it was not clear how the particles behave after use: do they disintegrate without harm to health, or do they contaminate the body like spent rocket fragments in space?

A team of scientists from the Institute of Bioorganic Chemistry of the Russian Academy of Sciences, the Moscow Institute of Physics and Technology, Sirius University, Prokhorov General Physics Institute of the Russian Academy of Sciences, National Research Nuclear University MEPhI and Pirogov Russian National Research Medical University was the first to investigate the long-term fate of magnetic nanoparticles in animals. The experts have come up with a new spectral magnetic method for material detection that makes it possible to separate the signal of magnetic nanoparticles from that of the iron naturally present in mammals. A magnetic coil acts on the nanoparticles in the body, and their magnetic response shows how much iron is left in the particles and how much has already been incorporated into the animal’s proteins. The high sensitivity of this technique and the ability to perform measurements on live mice made it possible for the first time to conduct such large-scale research in this field.

It turned out that the nanoparticles accumulate in lysosomes (one of the cell’s components), where they are slowly dissolved by the acidic environment and enzymes, and that the rate of this breakdown depends on the structure of the particle material. As the particles dissolved, excess iron was released, which the body stored in the liver and spleen and used as it saw fit. Overall, the particles proved to be non-toxic to the body.

These discoveries also open the door to the development of nanoparticles to treat certain forms of anaemia.

Ivan Zelepukin, the paper's first author, synthesizes nanoparticles for therapy. Source: Roman Mikheev, MIPT Press Service.